Osiyo. Dohiju? ᎣᏏᏲ. ᏙᎯᏧ? (Hello. How are you?) Hey, welcome back.

This last week I’ve devoted an extraordinary amount of time to the GUI and HUD that define what I want SERINDA to do and be like. I implemented my own Three.js and LeapMotion display coupled with OpenCV, since I wanted one server to do all of the work I needed. I’m also figuring out the limits of the LeapMotion’s usable tracking area.

Three.js uses WebGL, the web version of OpenGL, for rendering. All the years I spent working with OpenGL, with JMonkeyEngine GUIs, and with JMonkeyEngine for game development have helped me get to this point. A lot of my work on Microsoft Flight Simulator glass cockpits written in OpenGL also comes into play quite nicely. Also, if you’ve never explored the NeHe Tutorials, they are a must for getting started in OpenGL. Three.js has a Fundamentals link that is similar and provides a lot of examples to work with.

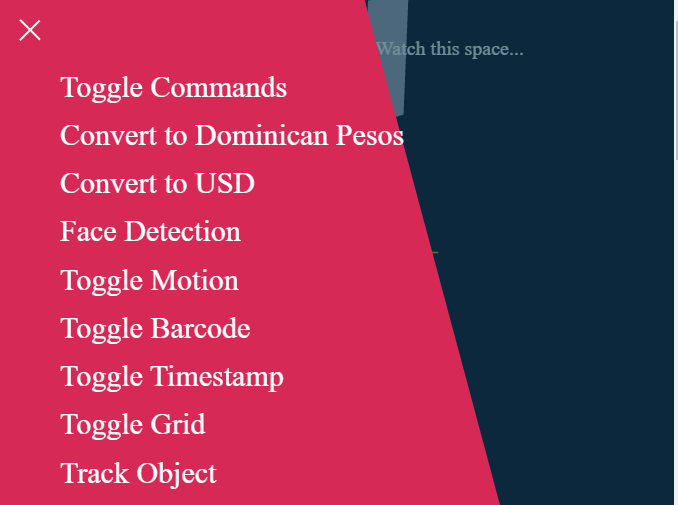

This week I have a couple of goals to accomplish. The first is to turn the Three.js work I’ve done into an interactive HUD/GUI. I have display items that I want to make interactive and semi-transparent. The idea is that, just as the Triton Launcher has the Triton Palm, I can have elements in my field of view (FOV) that I can touch, stretch, in some cases move, and more. The current hamburger menu implementation is one example.

In the screenshots below, you can see the hamburger menu on the upper-left side. When you click on it, the menu pops out.

In the HUD/GUI, you would see something like the hamburger menu, tap it with your hand, and the menu you want would display so you can select an item from it. This could even be done with a Hiro or ArUco marker on a flat device. Luckily, two libraries help with this: the Leap-widgets project and the Leap Controls project.
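The tap on the hamburger menu can be reduced to a hit test: a tap counts when the fingertip crosses the plane of a HUD element while inside its bounds. Here is a minimal Python sketch, assuming fingertip positions arrive once per frame in the same coordinate frame as the panel, that the panel lies in a plane of constant depth, and that pressing means moving toward lower z. `Panel`, `detect_tap`, and `HamburgerMenu` are hypothetical names for illustration, not part of Leap-widgets or Leap Controls.

```python
from dataclasses import dataclass


@dataclass
class Panel:
    """A rectangular HUD element in a plane of constant depth (hypothetical layout)."""
    name: str
    x_min: float
    x_max: float
    y_min: float
    y_max: float
    z: float  # depth of the panel plane, same units as the fingertip


def detect_tap(panel: Panel, prev_tip, curr_tip) -> bool:
    """True if the fingertip crossed the panel plane inside its bounds between two frames."""
    (px, py, pz), (cx, cy, cz) = prev_tip, curr_tip
    # The fingertip must go from in front of the plane to on or behind it.
    if not (pz > panel.z >= cz):
        return False
    # Interpolate the point where the fingertip's path crossed the plane.
    t = (pz - panel.z) / (pz - cz)
    hit_x = px + t * (cx - px)
    hit_y = py + t * (cy - py)
    return panel.x_min <= hit_x <= panel.x_max and panel.y_min <= hit_y <= panel.y_max


class HamburgerMenu:
    """Toggles open/closed each time the menu button is tapped."""

    def __init__(self, button: Panel):
        self.button = button
        self.open = False

    def update(self, prev_tip, curr_tip) -> bool:
        if detect_tap(self.button, prev_tip, curr_tip):
            self.open = not self.open
        return self.open
```

Testing for a crossing between two frames, rather than whether the fingertip is merely inside the panel, keeps a hovering hand from retoggling the menu on every frame.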

There are also non-interactive HUD/GUI elements that simply display data over objects. And there are elements I need to work on that combine a bit of both, like holding your hand up to see a 3D view screen, or looking at your wrist to see a menu. These would work the same way as, say, looking at your fridge and seeing its current inventory. I’ve spent a long time thinking about what I want and how I want to do it. I’ll try to get a designer to work with me to come up with guidelines and designs.
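The “hold your hand up” trigger can be sketched as an angle test: show the hand-mounted screen when the palm faces the viewer. This minimal Python sketch assumes the hand tracker reports a palm position and a palm normal (hand trackers such as the LeapMotion report both) in the same coordinate frame as the viewer’s eye. The 35 degree threshold and the function names are hypothetical choices.

```python
import math
from typing import List, Sequence


def _unit(v: Sequence[float]) -> List[float]:
    """Normalize a vector to length 1."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0:
        raise ValueError("zero-length vector")
    return [c / length for c in v]


def palm_faces_viewer(palm_normal, palm_position, eye_position, max_angle_deg=35.0) -> bool:
    """True when the palm normal points back at the eye within max_angle_deg degrees."""
    to_eye = _unit([e - p for e, p in zip(eye_position, palm_position)])
    normal = _unit(palm_normal)
    # Cosine of the angle between the palm normal and the direction to the eye.
    cos_angle = sum(a * b for a, b in zip(normal, to_eye))
    return cos_angle >= math.cos(math.radians(max_angle_deg))
```

In practice a little hysteresis (a wider angle to hide the screen than to show it) would keep it from flickering when the hand hovers near the threshold.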

Let’s get into the nuts and bolts a little.

The DOF sensor will give us X, Y, and Z values along with various acceleration outputs. I have both a 6DOF and a 9DOF breakout board; I’ll use the 9DOF. Think of this data as your orientation in the world: where you are, and which direction you’re moving, in any direction around you. (Strictly speaking, a 9DOF board measures acceleration, rotation rate, and magnetic heading; position has to be estimated by integrating acceleration, which drifts quickly.) As in VR, if your sensor is tied to the camera in a 3D scene and you don’t move, your view stays roughly the same, with minor fluctuations. If you tilt or rotate your head, different parts of the scene come into view, just as they would in a first-person shooter (FPS).

The data from the DOF sensor will be passed to the Three.js scene in a form it can understand. That means we read the X, Y, and Z values (for now we’ll only deal with these) and add them to the camera’s x, y, and z to get the camera’s new location. Think of it like the WASD keys in a game. W moves forward; instead of pressing W, we use +Z. A is left (-X), D is right (+X), and S is backward (-Z). We don’t use Y for the moment.

This sensor will replace the mouse and keyboard for moving in 3D space. Rotating your head will be like moving the mouse in an FPS to bring something into view. All of this works the same way it does in VR: with your head.
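The mapping above can be sketched as a small camera-pose update: gyroscope rates turn the view like a mouse, and WASD-style deltas move the camera relative to where the head points. This is a minimal Python sketch under stated assumptions: `CameraPose`, `apply_head_rotation`, and `apply_movement` are hypothetical names, the rates are in radians per second, and forward is +Z as in the mapping above. Note that a Three.js camera looks down its own negative Z axis by default, so copying this pose into a scene would mean flipping the forward sign (or rotating the sensor frame) to match. Plain gyro integration also drifts; a real build would fuse in the accelerometer and magnetometer, for example with a complementary or Madgwick filter.

```python
import math
from dataclasses import dataclass


@dataclass
class CameraPose:
    # Position in scene units, orientation in radians.
    x: float = 0.0
    y: float = 0.0
    z: float = 0.0
    yaw: float = 0.0    # rotation about the vertical (Y) axis; positive turns forward toward +X
    pitch: float = 0.0  # looking up (+) or down (-)


PITCH_LIMIT = math.pi / 2 - 0.01  # keeps the view from flipping over the top


def apply_head_rotation(pose: CameraPose, yaw_rate: float, pitch_rate: float, dt: float) -> None:
    """Integrate gyroscope rates (rad/s) over dt seconds, like moving the mouse in an FPS."""
    pose.yaw += yaw_rate * dt
    pose.pitch = max(-PITCH_LIMIT, min(PITCH_LIMIT, pose.pitch + pitch_rate * dt))


def apply_movement(pose: CameraPose, dx: float, dz: float) -> None:
    """WASD-style step: +dz forward (W), -dz back (S), +dx right (D), -dx left (A).

    The local step is rotated by the current yaw so "forward" follows the head.
    Y is not used yet.
    """
    c, s = math.cos(pose.yaw), math.sin(pose.yaw)
    pose.x += dx * c + dz * s
    pose.z += -dx * s + dz * c
```

Rotating the step by yaw is what makes this feel like an FPS rather than sliding along fixed world axes: after turning your head 90 degrees, “forward” follows your gaze.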

So, really, this week is going to be about:

- Combining the data output from the 9DOF with the Three.js scene

- Adding some objects out of view to be able to look at

- Adding some HUD/GUI objects that remain with the viewer

- Adding some HUD/GUI objects that remain with an object
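The difference between HUD/GUI objects that remain with the viewer and those that remain with an object comes down to what their offset is measured from. This minimal Python sketch assumes a camera position plus yaw (pitch is ignored for brevity) and a dictionary of positions for currently detected objects; `HudItem`, `world_position`, and the object IDs are hypothetical. In Three.js itself, a common shortcut for head-locked items is to add the mesh as a child of the camera, which does the same math for you.

```python
import math
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

Vec3 = Tuple[float, float, float]


def rotate_yaw(v: Vec3, yaw: float) -> Vec3:
    """Rotate a vector about the vertical (Y) axis by yaw radians."""
    x, y, z = v
    c, s = math.cos(yaw), math.sin(yaw)
    return (x * c + z * s, y, -x * s + z * c)


def add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


@dataclass
class HudItem:
    name: str
    offset: Vec3                     # offset from whatever the item is anchored to
    anchor: str = "viewer"           # "viewer" = head-locked, "object" = follows a tracked object
    object_id: Optional[str] = None  # which detected object, when anchor == "object"


def world_position(item: HudItem, camera_pos: Vec3, camera_yaw: float,
                   object_positions: Dict[str, Vec3]) -> Optional[Vec3]:
    """Where to draw the item this frame, or None if its object is not currently detected."""
    if item.anchor == "viewer":
        # Head-locked: the offset turns with the head, so the item stays put in the FOV.
        return add(camera_pos, rotate_yaw(item.offset, camera_yaw))
    if item.anchor == "object":
        # Object-locked: ignores the camera and follows the detection.
        target = object_positions.get(item.object_id)
        return None if target is None else add(target, item.offset)
    raise ValueError(f"unknown anchor {item.anchor!r}")
```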

I have three tests for this:

- Place objects to the left and right, just off camera (OC)

- Add HUD/GUI items that remain at a constant location in my field of view (FOV)

- Add HUD/GUI items to an object (via OpenCV detection)

Each of these relies on testing with the 9DOF sensor. Turning my head left and right should bring the items on the left and right into view without changing the “static” HUD/GUI I see. With a HUD/GUI attached to an object, my view of it should change as I move around in the world (depending on whether the object is billboarded or not).
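The first test and the billboarding case can both be expressed with one helper: the yaw angle from one point to another. This minimal Python sketch checks whether a target falls inside a horizontal field of view and computes the yaw that turns a billboarded item to face the camera, rotating about the vertical axis only. It uses the same convention as above (0 is along +Z, positive toward +X), and all function names are hypothetical. In Three.js, a `Sprite` or a call to `Object3D.lookAt` can handle billboarding for you.

```python
import math
from typing import Sequence


def wrap_angle(a: float) -> float:
    """Wrap an angle in radians into [-pi, pi)."""
    return (a + math.pi) % (2 * math.pi) - math.pi


def bearing_to(from_pos: Sequence[float], to_pos: Sequence[float]) -> float:
    """Yaw (radians) of the direction from one point to another; 0 is +Z, positive toward +X."""
    return math.atan2(to_pos[0] - from_pos[0], to_pos[2] - from_pos[2])


def in_horizontal_fov(camera_pos, camera_yaw: float, target_pos, fov_deg: float) -> bool:
    """True if the target lies within the camera's horizontal field of view."""
    offset = wrap_angle(bearing_to(camera_pos, target_pos) - camera_yaw)
    return abs(offset) <= math.radians(fov_deg) / 2


def billboard_yaw(item_pos, camera_pos) -> float:
    """Yaw that turns an item's +Z face toward the camera, rotating about Y only."""
    return bearing_to(item_pos, camera_pos)
```

For the off-camera test, a cube at (-3, 0, 1) sits outside a 60 degree view when facing straight ahead and comes into view after turning the head 90 degrees to the left.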

I think these are sufficient tests for this.

Now, there are other complications that can arise. If I place an object in the scene, its location may not be remembered. For example, if I place a cube in the living room and then restart the app in the kitchen, the cube may show up in the kitchen. That would have to be solved another way. However, if the HUD/GUI is attached to a specific object, then every time that object comes into view, and that HUD/GUI is turned on, it should display as expected.
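Attaching HUD/GUI items to objects sidesteps the restart problem because the attachment can be keyed to the object’s identity rather than to world coordinates. This minimal Python sketch assumes detection yields a stable ID per object (for example, an ArUco marker ID or an OpenCV class label); `AttachmentRegistry` and IDs like `"aruco:7"` are hypothetical. The registry round-trips through JSON so it survives a restart, wherever the app starts.

```python
import json
from typing import Dict, Iterable, List


class AttachmentRegistry:
    """Remembers which HUD item belongs to which recognized object, and whether it is on."""

    def __init__(self) -> None:
        self._items: Dict[str, dict] = {}  # object_id -> {"hud": name, "enabled": bool}

    def attach(self, object_id: str, hud_name: str, enabled: bool = True) -> None:
        self._items[object_id] = {"hud": hud_name, "enabled": enabled}

    def set_enabled(self, object_id: str, enabled: bool) -> None:
        self._items[object_id]["enabled"] = enabled

    def huds_for(self, detected_ids: Iterable[str]) -> List[str]:
        """HUD items to draw this frame, given the IDs currently detected."""
        return [self._items[i]["hud"] for i in detected_ids
                if i in self._items and self._items[i]["enabled"]]

    def to_json(self) -> str:
        return json.dumps(self._items, sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "AttachmentRegistry":
        registry = cls()
        registry._items = json.loads(text)
        return registry
```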

That brings up the next item to consider. Since SERINDA works through both voice and visuals, these items can be configured to turn on or off. For example, maybe every time you look at the fridge it shows the list of contents. But if you work at the dining room table for a day, every time you look up you see the fridge, and with it that list. You could turn it off for a set amount of time or indefinitely. Or maybe you could assign a gesture: you look at the fridge and see a horizontal bar, you swipe that bar down and it shows you the list, and after a few seconds the list view closes.
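The on/off behavior described above fits in a small per-overlay state object: a snooze that expires (or never does) and a swipe that opens the list for a few seconds. This minimal Python sketch assumes the horizontal bar itself is always drawn and `is_visible` governs only the list; `OverlayVisibility`, the mode names, and the 5 second default are hypothetical. The clock is injectable so the timing can be tested without waiting.

```python
import time
from typing import Callable, Optional


class OverlayVisibility:
    """Per-overlay visibility with an optional snooze and a swipe-to-peek mode."""

    def __init__(self, mode: str = "always", peek_seconds: float = 5.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.mode = mode              # "always": show on sight; "on_swipe": show only after a swipe
        self.peek_seconds = peek_seconds
        self.clock = clock
        self.snoozed_until = 0.0      # float("inf") means off indefinitely
        self.peek_until = 0.0

    def snooze(self, seconds: Optional[float] = None) -> None:
        """Turn the overlay off for `seconds`, or indefinitely when None."""
        self.snoozed_until = float("inf") if seconds is None else self.clock() + seconds

    def resume(self) -> None:
        self.snoozed_until = 0.0

    def on_swipe_down(self) -> None:
        """Open the list for peek_seconds."""
        self.peek_until = self.clock() + self.peek_seconds

    def is_visible(self) -> bool:
        now = self.clock()
        if now < self.snoozed_until:
            return False              # a snooze wins over everything else
        if self.mode == "always":
            return True
        return now < self.peek_until  # "on_swipe": only during the peek window
```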

There are many considerations here. It’s an extremely exciting world to be in.

Until next time. Dodadagohvi. ᏙᏓᏓᎪᎲᎢ.